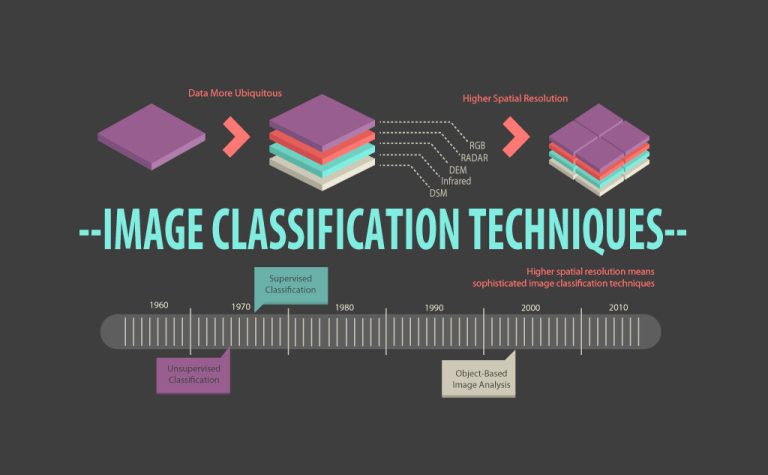

Image Classification Techniques in Remote Sensing [Infographic]

“Image classification is the process of assigning land cover classes to pixels. For example, classes include water, urban, forest, agriculture, and grassland.”

What is Image Classification in Remote Sensing?

The 4 main types of image classification techniques in remote sensing are:

- Unsupervised image classification

- Supervised image classification

- Object-based image analysis

- Deep learning object detection

Unsupervised and supervised image classification are the two most common approaches.

However, object-based classification and deep learning have gained popularity because they are useful for high-resolution data.

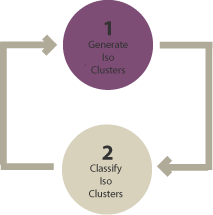

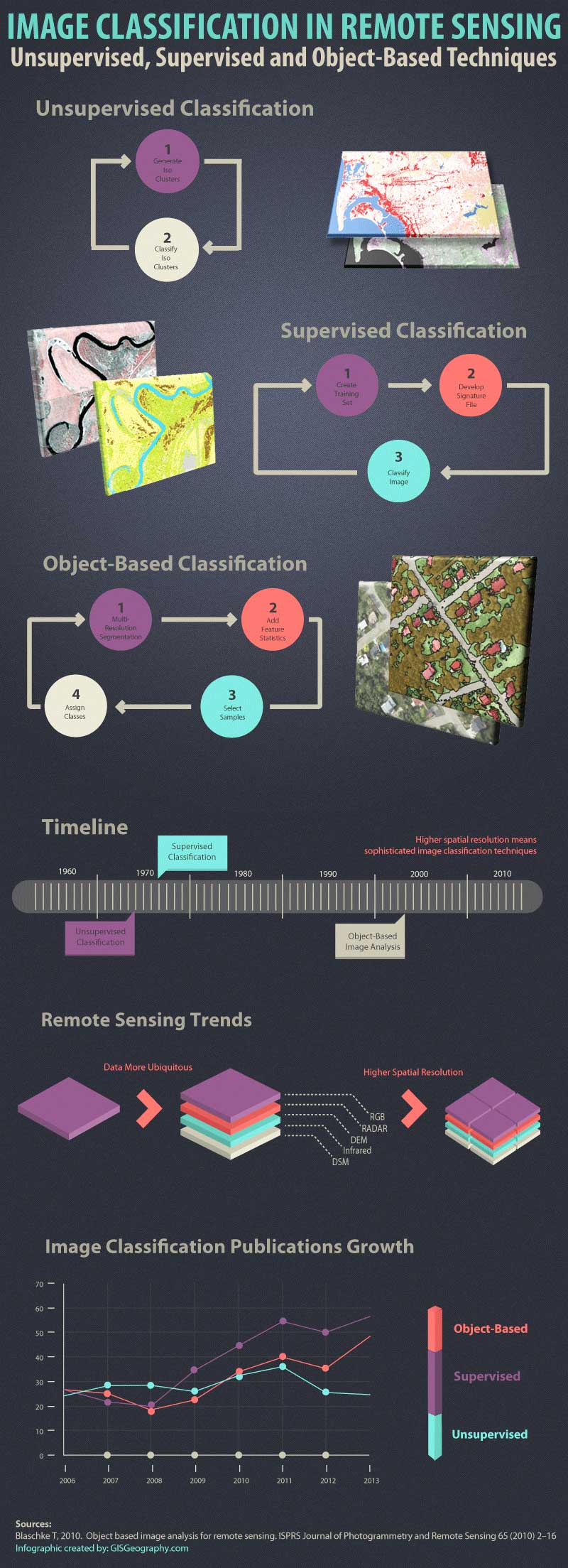

1. Unsupervised Classification

In unsupervised classification, the software first groups pixels into “clusters” based on their properties. Then, you classify each cluster with a land cover class.

Overall, unsupervised classification is the most basic technique. Because you don’t need samples for unsupervised classification, it’s an easy way to segment and understand an image.

The two basic steps for unsupervised classification are:

- Generate clusters

- Assign classes

Using remote sensing software, we first create “clusters”. Some of the common image clustering algorithms are:

- K-means

- ISODATA

After picking a clustering algorithm, you choose the number of groups you want to generate. For example, you can create 8, 20, or 42 clusters. With more clusters, the pixels within each group resemble each other more closely. With fewer clusters, the variability within each group increases.

To be clear, these are unclassified clusters. The next step is to manually assign land cover classes to each cluster. For example, if you want to classify vegetation and non-vegetation, you can select the clusters that represent them best.
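To make the two steps concrete, here is a minimal sketch in plain Python. It is not how any particular remote sensing package implements clustering. The pixel values are made-up (red, near-infrared) reflectance pairs, the `kmeans` helper is a bare-bones K-means written for illustration, and the rule that picks the vegetation cluster stands in for the manual decision an analyst would make after looking at the clusters.

```python
import random

def squared_distance(a, b):
    """Squared Euclidean distance between two band-value tuples."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def kmeans(pixels, k, iterations=20, seed=0):
    """Bare-bones K-means. Returns (cluster label per pixel, cluster centers)."""
    rng = random.Random(seed)
    centers = rng.sample(pixels, k)  # start from k randomly chosen pixels
    labels = [0] * len(pixels)
    for _ in range(iterations):
        # Assignment step: each pixel joins its nearest center.
        labels = [
            min(range(k), key=lambda c: squared_distance(px, centers[c]))
            for px in pixels
        ]
        # Update step: each center moves to the mean of its members.
        for c in range(k):
            members = [px for px, lab in zip(pixels, labels) if lab == c]
            if members:
                centers[c] = tuple(sum(band) / len(members) for band in zip(*members))
    return labels, centers

# Hypothetical (red, NIR) reflectance values, invented for this example.
pixels = [
    (0.05, 0.50), (0.06, 0.55), (0.04, 0.48),  # look like vegetation
    (0.30, 0.32), (0.28, 0.35), (0.33, 0.30),  # look like bare ground
]

# Step 1: generate clusters. At this point they are unclassified.
labels, centers = kmeans(pixels, k=2)

# Step 2: assign classes. An analyst would do this by eye; here the cluster
# whose center has the largest NIR-minus-red gap is called vegetation.
vegetation_cluster = max(range(2), key=lambda c: centers[c][1] - centers[c][0])
cluster_to_class = {
    c: "vegetation" if c == vegetation_cluster else "non-vegetation"
    for c in range(2)
}
classified = [cluster_to_class[lab] for lab in labels]
print(classified)
```

Notice that the clustering never sees a class name. The land cover labels only enter in the second step.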

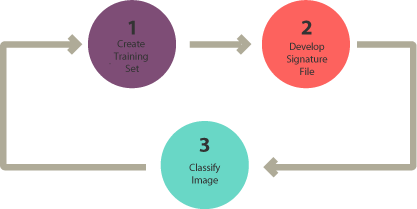

2. Supervised Classification

In supervised classification, you select representative samples for each land cover class. The software then uses these “training sites” to classify the entire image.

The three basic steps for supervised classification are:

- Select training areas

- Generate signature file

- Classify

For supervised image classification, you first create training samples. For example, you identify urban areas by marking them in the image. Then, you continue adding training sites that are representative of that class across the entire image.

For each land cover class, you continue creating training samples until you have representative samples for each class. In turn, this would generate a signature file, which stores all training samples’ spectral information.

Finally, you use the signature file to run a classification. From here, you have to pick a classification algorithm such as:

- Maximum likelihood

- Minimum-distance

- Principal components

- Support vector machine (SVM)

- Iso cluster

As shown in several studies, SVM is one of the best classification algorithms in remote sensing. But each option has its own advantages, which you can test for yourself.
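Here is a rough sketch of the three steps using the minimum-distance algorithm, the simplest one on the list. Everything in it is an assumption made for illustration: the reflectance values are invented (red, near-infrared) pairs, and the "signature" here is only a per-class mean, whereas signature files in real software typically store more statistics than that.

```python
def build_signatures(training_samples):
    """Reduce each class's training pixels to a mean vector (a simplified signature)."""
    return {
        name: tuple(sum(band) / len(samples) for band in zip(*samples))
        for name, samples in training_samples.items()
    }

def classify_min_distance(pixel, signatures):
    """Assign the class whose mean is closest to the pixel in spectral space."""
    return min(
        signatures,
        key=lambda name: sum((p - m) ** 2 for p, m in zip(pixel, signatures[name])),
    )

# Step 1: select training areas (hypothetical (red, NIR) values per class).
training_samples = {
    "water": [(0.04, 0.02), (0.05, 0.03)],
    "forest": [(0.05, 0.45), (0.06, 0.50)],
    "urban": [(0.25, 0.28), (0.30, 0.30)],
}

# Step 2: generate the signatures.
signatures = build_signatures(training_samples)

# Step 3: classify every pixel in the image.
image_pixels = [(0.05, 0.48), (0.27, 0.27), (0.03, 0.03)]
classified = [classify_min_distance(px, signatures) for px in image_pixels]
print(classified)
```

Swapping in another algorithm changes only the last step. The training samples and signatures stay the same, which is why it's easy to test several classifiers on one set of training sites.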

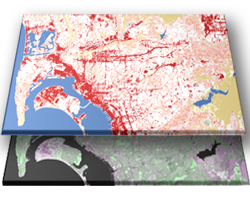

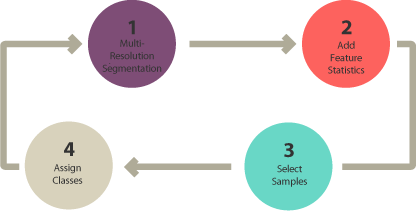

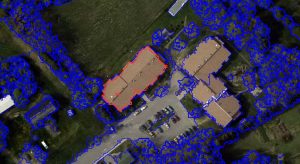

3. Object-Based Image Analysis (OBIA)

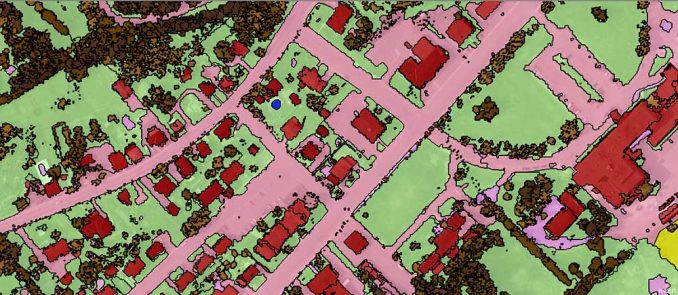

Supervised and unsupervised classification are pixel-based. In other words, they work on square pixels and assign a class to each one. But object-based image classification groups pixels into representative vector shapes with size and geometry.

Here are the steps to perform object-based image analysis classification:

- Perform multiresolution segmentation

- Select training areas

- Define statistics

- Classify

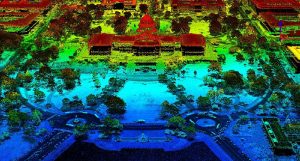

Object-based image analysis (OBIA) segments an image by grouping pixels. It doesn’t create single pixels. Instead, it generates objects with different geometries. If you have the right image, objects can be so meaningful that the segmentation does the digitizing for you. For example, the segmentation results below highlight buildings.

The 2 most common segmentation algorithms are:

- Multi-resolution segmentation in eCognition

- The segment mean shift tool in ArcGIS Pro
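To get a feel for what "grouping pixels into objects" means, here is a toy region-growing segmentation in plain Python. To be clear, this is neither multi-resolution segmentation nor segment mean shift. It is a much simpler stand-in that merges neighboring pixels whose values are close to a seed pixel. The image values and the `tolerance` parameter are made up for this example.

```python
from collections import deque

def segment(image, tolerance):
    """Toy region growing on a single band.

    Scans the image and, from each unlabeled pixel (the seed), grows a segment
    over 4-connected neighbors whose value is within `tolerance` of the seed.
    Returns (label grid, number of segments).
    """
    rows, cols = len(image), len(image[0])
    labels = [[-1] * cols for _ in range(rows)]
    next_label = 0
    for r in range(rows):
        for c in range(cols):
            if labels[r][c] != -1:
                continue  # already part of a segment
            seed_value = image[r][c]
            labels[r][c] = next_label
            queue = deque([(r, c)])
            while queue:
                y, x = queue.popleft()
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    if (
                        0 <= ny < rows
                        and 0 <= nx < cols
                        and labels[ny][nx] == -1
                        and abs(image[ny][nx] - seed_value) <= tolerance
                    ):
                        labels[ny][nx] = next_label
                        queue.append((ny, nx))
            next_label += 1
    return labels, next_label

# Hypothetical single-band image with three visually distinct areas.
image = [
    [10, 11, 50, 52],
    [12, 10, 51, 50],
    [10, 11, 90, 91],
    [11, 12, 92, 90],
]

labels, segment_count = segment(image, tolerance=5)
for row in labels:
    print(row)
print("segments:", segment_count)
```

The output is a grid of segment IDs rather than classes. Each ID marks one object, which you can then describe with shape, texture, and spectral statistics before classifying it.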

In Object-Based Image Analysis (OBIA) classification, you can use different methods to classify objects. For example, you can use:

SHAPE: If you want to classify buildings, you can use a shape statistic such as “rectangular fit”. This compares an object’s geometry against the shape of a rectangle.

TEXTURE: Texture is the homogeneity of an object. For example, water is mostly homogeneous because it’s mostly dark blue. But forests have shadows and are a mix of green and black.

SPECTRAL: You can use the mean value of spectral properties such as near-infrared, short-wave infrared, red, green, or blue.

GEOGRAPHIC CONTEXT: Objects have proximity and distance relationships between neighbors.

NEAREST NEIGHBOR CLASSIFICATION: Nearest neighbor (NN) classification is similar to supervised classification. After multi-resolution segmentation, the user identifies sample sites for each land cover class. Next, they define statistics to classify image objects. Finally, the nearest neighbor classifies objects based on their resemblance to the training sites and the statistics defined.
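As a rough illustration of object statistics, the sketch below computes one shape measure and one spectral measure for two hypothetical objects. The bounding-box fill ratio is a simplified stand-in for a statistic like "rectangular fit" and is not the formula any particular software uses. The pixel coordinates and near-infrared values are invented.

```python
def bounding_box_fill(object_pixels):
    """Share of the object's bounding box that the object covers (1.0 = perfect rectangle)."""
    rows = [r for r, _ in object_pixels]
    cols = [c for _, c in object_pixels]
    box_area = (max(rows) - min(rows) + 1) * (max(cols) - min(cols) + 1)
    return len(object_pixels) / box_area

def mean_value(object_pixels, band):
    """Mean band value over all pixels that belong to the object."""
    return sum(band[r][c] for r, c in object_pixels) / len(object_pixels)

# Two hypothetical objects, each given as a list of (row, col) pixel positions.
building = [(r, c) for r in range(2) for c in range(3)]   # a 2 x 3 rectangle
winding_path = [(0, 0), (1, 0), (2, 0), (2, 1), (2, 2)]   # an L-shaped object

# Hypothetical near-infrared band covering the same 3 x 3 area.
nir_band = [
    [0.2, 0.2, 0.2],
    [0.2, 0.2, 0.2],
    [0.6, 0.6, 0.6],
]

print(bounding_box_fill(building))       # rectangle fills its bounding box
print(bounding_box_fill(winding_path))   # L-shape leaves part of the box empty
print(mean_value(building, nir_band))
```

A nearest neighbor classifier would then compare statistics like these against the same statistics computed for the training objects.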

4. Deep Learning Object Detection

I’ve seen deep learning object detection grow tremendously in remote sensing. Neural networks can find objects in imagery like buildings and trees because they learn from large data sets.

For example, the Esri Analytics team has released a set of deep learning models as part of the Living Atlas of the World. Here, you can find models for object detection:

- Car/ship detection

- Tree segmentation

- Land cover classification

- Agricultural field delineation

- Building footprints detection

- Wildfire delineation

- …And elephants?

And the list goes on…

Some of these models build on Meta’s Segment Anything Model (SAM). Either way, it’s exciting because deep learning looks like the future of remote sensing. For detection tasks like these, accuracy is often higher than with traditional classifiers. On top of that, you can repeat the process wherever suitable training data is available.

Which Image Classification Technique Should You Use?

Let’s say you want to classify water in a high spatial resolution image.

You decide to choose all pixels with low NDVI in that image. But this could also pick up other pixels in the image that aren’t water. For this reason, pixel-based approaches like unsupervised and supervised classification tend to give a salt-and-pepper look.
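Here is a small sketch of that problem. NDVI is (NIR − Red) / (NIR + Red), so any surface that reflects about as much red as near-infrared ends up with a low value, not just water. The reflectance values and the 0.1 threshold below are made up for illustration.

```python
def ndvi(nir, red):
    """Normalized Difference Vegetation Index: (NIR - Red) / (NIR + Red)."""
    total = nir + red
    return 0.0 if total == 0 else (nir - red) / total

# Hypothetical (red, NIR) reflectance for four kinds of pixels.
samples = {
    "water": (0.03, 0.01),
    "asphalt": (0.10, 0.11),
    "building shadow": (0.02, 0.02),
    "forest": (0.05, 0.50),
}

LOW_NDVI_THRESHOLD = 0.1  # assumed cut-off for "low NDVI"

flagged_as_water = [
    name for name, (red, nir) in samples.items()
    if ndvi(nir=nir, red=red) < LOW_NDVI_THRESHOLD
]
print(flagged_as_water)
```

With these numbers, asphalt and shadow pixels pass the same test as water. In a high-resolution image, those stray pixels are scattered everywhere, which is where the speckled look comes from.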

Humans naturally aggregate spatial information into groups. Multiresolution segmentation does this task by grouping homogeneous pixels into objects. Water features are easily recognizable after multiresolution segmentation. This is how humans visualize spatial features.

- When should you use pixel-based (unsupervised and supervised classification)?

- When should you use object-based classification?

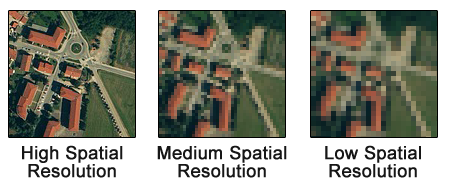

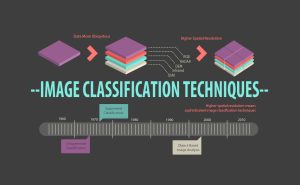

As illustrated in this article, spatial resolution is an important factor when selecting image classification techniques.

When you have a low spatial resolution image, both traditional pixel-based and object-based image classification techniques perform well.

But when you have a high spatial resolution image, OBIA is superior to traditional pixel-based classification.

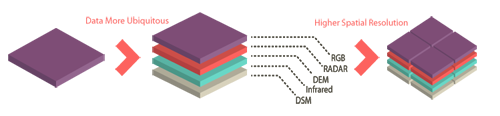

Remote Sensing Data Trends

In 1972, Landsat-1 was the first satellite to collect Earth reflectance, at a nominal resolution of roughly 80 meters (its data is commonly resampled to 60-meter pixels). At this time, unsupervised and supervised classification were the two image classification techniques available. For this spatial resolution, this was sufficient.

However, OBIA has grown significantly as a digital image processing technique.

Over the years, there has been a growing demand for remotely sensed data. Just check out our list which includes hundreds of remote sensing applications. For example, food security, environment, and public safety all have a high demand for satellite imagery.

To meet demand, satellite imagery is aiming for higher spatial resolution at a wider range of frequencies. Here are some of the major remote sensing data trends that have emerged over the past several years.

- More ubiquitous

- Higher spatial resolution

- A wider range of frequencies (including hyperspectral)

But higher resolution images do not guarantee better land cover classification. The image classification technique you use is a very important factor for better accuracy.

Unsupervised vs Supervised vs Object-Based Classification

A case study from the University of Arkansas compared object-based vs pixel-based classification. The goal was to compare high and medium spatial resolution imagery.

Overall, object-based classification outperformed both unsupervised and supervised pixel-based classification methods. Because OBIA used both spectral and contextual information, it had higher accuracy.
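The study’s own numbers aren’t reproduced here. But if you’re wondering how accuracy is typically measured in a comparison like this, the usual approach is to check the classified map against reference points. Here is a minimal sketch with invented labels that computes overall accuracy and a confusion matrix.

```python
from collections import Counter

def confusion_matrix(reference, predicted):
    """Count how often each (reference class, predicted class) pair occurs."""
    return Counter(zip(reference, predicted))

def overall_accuracy(reference, predicted):
    """Fraction of reference points whose predicted class matches."""
    correct = sum(1 for ref, pred in zip(reference, predicted) if ref == pred)
    return correct / len(reference)

# Hypothetical reference (ground truth) labels and classifier output.
reference = ["water", "water", "forest", "forest", "urban", "urban", "urban", "forest"]
predicted = ["water", "urban", "forest", "forest", "urban", "urban", "forest", "forest"]

matrix = confusion_matrix(reference, predicted)
accuracy = overall_accuracy(reference, predicted)

print(f"overall accuracy: {accuracy:.2f}")
for (ref, pred), count in sorted(matrix.items()):
    print(f"reference={ref:<7} predicted={pred:<7} count={count}")
```

Running the same check on the output of each technique, with the same reference points, is what makes the results comparable.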

This study is a good example of some of the limitations of pixel-based image classification techniques. But now, deep learning classification comes into play. I see it being applied everywhere. Even in security cameras.

Growth of Object-Based Classification

Pixels are the smallest units represented in an image. Image classification uses reflectance statistics for individual pixels.

Technology has advanced considerably, and high spatial resolution imagery is far more available than it used to be. But image classification techniques should be taken into consideration as well. The spotlight is shining on object-based image analysis to deliver quality products.

According to Google Scholar’s search results, all image classification techniques have shown steady growth in the number of publications. Recently, object-based classification and deep learning have shown the most growth.

If you enjoyed this guide to image classification techniques, I recommend that you download the remote sensing image classification Infographic.

References

1. Blaschke, T. (2010). Object-based image analysis for remote sensing. ISPRS Journal of Photogrammetry and Remote Sensing, 65, 2–16.

2. Weih, R. C., Jr. and Riggan, N. D., Jr. Object-Based Classification vs Pixel-Based Classification: Comparative Importance of Multi-Resolution Imagery.

3. Baatz, M. and Schäpe, A. Multiresolution Segmentation: an optimization approach for high-quality multi-scale image segmentation.

4. Trimble eCognition Developer

Thanks for this article. It is quite helpful. I have one question:

Don’t you think there are only two classification approaches, supervised and unsupervised (and OK, maybe semi-supervised)? Then both of them can work pixel-based or segment-based. And within the segment-based case, we could find object-based at the highest level. I say this because I have been working, for example, with an unsupervised object-based approach, and this doesn’t fit well into the classification as it is presented in this article. Thanks. Pablo

With the aid of a diagram, describe the typical reflectance curve of a healthy vegetation in the visible and near-infrared portions of the electromagnetic spectrum.

Here’s a visual on the reflectance for NIR and red – but not a reflectance curve. https://gisgeography.com/ndvi-normalized-difference-vegetation-index/

I need to know why classification in remote sensing is not 100% accurate?
