Is GIS Really Under the Hood of Self-Driving Cars?

Will GIS thrive in a world of driverless cars?

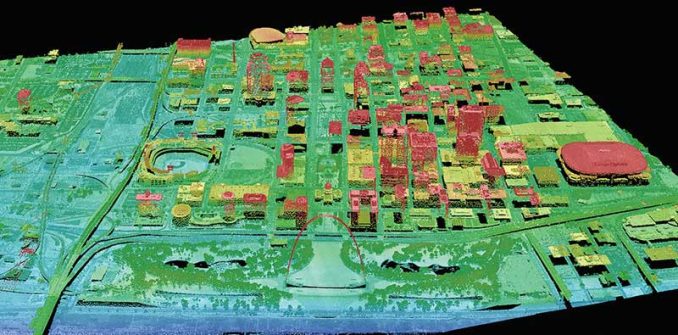

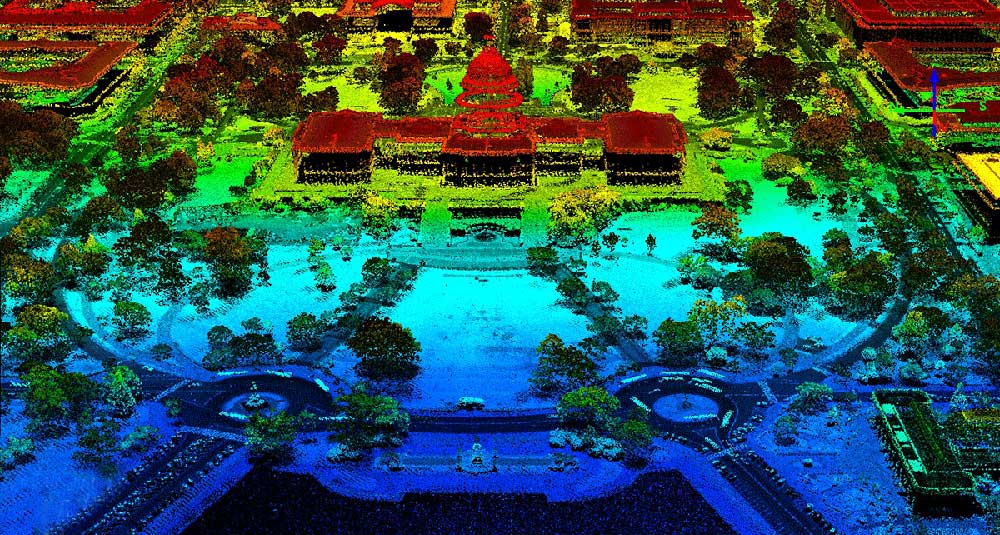

Driverless cars see the world by collecting millions of sensor returns (about 27,000 light points a second) and overlaying them into an extremely precise 3D map.

For every single movement the car makes, it calculates its next step based on where this point cloud data is located in geographic space.

What is the potential for GIS technology to integrate into the new frontier of driverless vehicles?

And vice versa, how can GIS benefit from a world of driverless cars?

Perception of its environment with LiDAR and SLAM

First, let’s understand the brain of driverless cars. For each movement the car makes, it uses LiDAR, radar, cameras, and position estimators that constantly scan in 360°.

Combined with SLAM (Simultaneous Localisation and Mapping), cars map their surroundings in real time, orienting themselves based on sensor input. This view is far superior to what humans can see.
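To make the idea of placing sensor returns in geographic space a little more concrete, here is a minimal sketch. It is not SLAM itself, only the coordinate transform that sits underneath it: points measured relative to the car are rotated and shifted into a map frame using the car's estimated position and heading. The function name `sensor_to_map` and the flat 2D setup are assumptions made for illustration.

```python
import math

def sensor_to_map(points, vehicle_x, vehicle_y, heading_rad):
    """Move (x, y) points from the vehicle frame into the map frame.

    Assumes a flat 2D world: x points forward from the car, y to its left,
    and heading_rad is the car's heading measured counter-clockwise from
    the map's x axis.
    """
    cos_h, sin_h = math.cos(heading_rad), math.sin(heading_rad)
    return [
        (vehicle_x + cos_h * px - sin_h * py,
         vehicle_y + sin_h * px + cos_h * py)
        for px, py in points
    ]

# A return 1 m straight ahead of a car at (100, 200) that faces along +y.
mapped = sensor_to_map([(1.0, 0.0)], 100.0, 200.0, math.pi / 2)
```

A real system works in 3D and has to estimate the pose itself, which is the hard part that SLAM addresses.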

Even though humans can perceive their surroundings with ease, for a computer it is an incredibly difficult challenge. For example, humans can recognize pedestrians, traffic lights, and crosswalks. Also, humans can anticipate movement from cyclists and police officers through simple hand gestures.

This is why driverless cars use 360° LiDAR sensors mounted on the vehicle, which give it a full perspective of its surroundings. As point cloud data is constantly fed into machine learning (ML) algorithms, driverless cars begin to make sense of noisy data. This is the underlying brain of the vehicle, the part that extracts features from the road.

How well the neural network detects objects on the road depends on what has been labeled in its training data. Essentially, the more varied the situations it trains on, the better it is at discerning, say, pedestrians from wildlife.

A precise geometric HD road network map

The growing trend is for driverless cars to use GPS only for a rough vehicle location because GPS on its own is unreliable. For example, Google’s self-driving car (Waymo) is designed so that it does not depend on GPS.

So can driverless cars function solely on sensor data without external information? In other words, do they need pre-loaded 3D maps at all to be fully functional?

There are a lot of reasons to believe that autonomous vehicles require accurate maps because of safety concerns. For example, if heavy snow or rain covers lane markings, vehicles need curbs and lane size to fall back on.

In fact, companies like HERE Maps and TomTom are already starting to build high-definition (HD) maps delineating lane markings for drivable areas. In turn, self-driving cars use these maps to know precisely where they are and where they are heading.

Dynamic routing for any situation

If driverless cars want to travel from point A to point B, they need the following three things:

- An existing road network that restricts where they can travel.

- Accurate geocoded addresses within it to know where they are going.

- And a powerful routing algorithm that takes them from A to B.

For navigation purposes, cars have to compute the fastest or the shortest path. When situations change on the road, they need to dynamically calculate an alternative path.

They need an operational environment in the form of maps to interact with sensor input. After all, it’s GIS running in the background that decides optimal paths. But the optimal route isn’t always the shortest. Sometimes it’s the one with the least traffic.
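The difference between the shortest route and the fastest one is easy to show with a small routing sketch. The example below runs Dijkstra's algorithm, a standard shortest-path method, over a toy road network. The network, its distances and travel times, and the function name `best_path` are all made up for illustration; this is not how any particular vendor's routing engine works.

```python
import heapq

def best_path(graph, start, goal, weight):
    """Dijkstra's algorithm over a dict-of-dicts road graph.

    graph[u][v] is a dict of edge attributes, and `weight` names the
    attribute to minimize (for example "km" or "minutes").
    Returns (total_cost, path) or None if the goal is unreachable.
    """
    queue = [(0.0, start, [start])]
    visited = set()
    while queue:
        cost, node, path = heapq.heappop(queue)
        if node == goal:
            return cost, path
        if node in visited:
            continue
        visited.add(node)
        for neighbor, attrs in graph.get(node, {}).items():
            if neighbor not in visited:
                heapq.heappush(
                    queue, (cost + attrs[weight], neighbor, path + [neighbor])
                )
    return None

# Hypothetical network: the route through B is shorter but congested.
roads = {
    "A": {"B": {"km": 2.0, "minutes": 12.0}, "C": {"km": 3.0, "minutes": 4.0}},
    "B": {"D": {"km": 2.0, "minutes": 10.0}},
    "C": {"D": {"km": 3.0, "minutes": 5.0}},
    "D": {},
}

shortest = best_path(roads, "A", "D", "km")       # goes through B
fastest = best_path(roads, "A", "D", "minutes")   # goes through C
```

Re-running the same search after edge weights change is one simple way to get the dynamic rerouting described above.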

Avoiding traffic jams and following the rules of the road

As millions of users connect with Waze, they unknowingly provide an important benefit to society. That is, they map out road networks, turn restrictions, and traffic jams.

More than ever before, companies like HERE Maps and Waze understand traffic congestion in cities through crowdsourcing. By enriching the GPS navigation system with this location data, all in a single map, they help our daily commutes avoid traffic delays.

Over time, GIS can optimize routes by understanding historical traffic patterns coupled with real-time traffic data. For any given day, it can forecast the expected travel time and improve the overall driver experience.
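One very simple way to picture coupling historical patterns with real-time data is a weighted blend of the two. The sketch below is an assumption for illustration only: the function name `estimate_travel_minutes` and the default `live_weight` of 0.7 are invented here, and production systems use far richer models.

```python
def estimate_travel_minutes(historical_minutes, live_minutes=None, live_weight=0.7):
    """Blend a historical average with a live observation for one road segment.

    historical_minutes: past travel times for this segment and time of day.
    live_minutes: the latest real-time travel time, or None if unavailable.
    live_weight: how much to trust the live value (an arbitrary assumption).
    """
    if not historical_minutes:
        raise ValueError("need at least one historical observation")
    baseline = sum(historical_minutes) / len(historical_minutes)
    if live_minutes is None:
        # No live feed: fall back to the historical pattern alone.
        return baseline
    return live_weight * live_minutes + (1 - live_weight) * baseline

typical = estimate_travel_minutes([10, 12, 14])
congested = estimate_travel_minutes([10, 12, 14], live_minutes=20, live_weight=0.5)
```

An estimate like this could serve as the per-road travel time that a routing algorithm minimizes.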

Building smarter cities with the Internet of Things (IoT)

As driverless cars start patrolling the road, we essentially have surveying equipment constantly building topographic maps. In great detail, they capture roadside objects, buildings, and cityscapes.

The first thing smart cities need is an inventory of everything they have. Because we can get an accurate inventory of city assets, this is the first step to improving infrastructure coordination.

The more vehicles there are on the road, the more connected and efficient the network becomes. Now, we enter the realm of the Internet of Things (IoT). With only the perception of a single vehicle, it’s always going to be reactive. We can’t have any pre-planning of any sort. But a connected network of vehicles can be proactive, adjusting before any one vehicle encounters snags in the network.

For example, the Internet of Things will understand where traffic congestion occurs. Not only will this be relayed to your vehicle, but city planners will also have the information to improve infrastructure. Also, it will incorporate geofencing into your daily routine. Whether it’s for security, retail, or delivery, geofencing gives real-time alerts and increases awareness.
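At its simplest, a geofence is a test of whether a position falls inside a defined area. Here is a minimal sketch of a circular geofence that uses the haversine formula for great-circle distance. The function names and the choice of a circle rather than a polygon are assumptions for illustration.

```python
import math

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in meters

def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in meters between two latitude/longitude points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2)
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))

def inside_geofence(lat, lon, center_lat, center_lon, radius_m):
    """True if (lat, lon) lies within radius_m meters of the fence center."""
    return haversine_m(lat, lon, center_lat, center_lon) <= radius_m

# A vehicle roughly 111 m north of a fence centered on (0, 0).
alert = inside_geofence(0.001, 0.0, 0.0, 0.0, radius_m=200)
```

A real-time alert then amounts to running this check on each new position fix and reacting when the result changes.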

Is GIS the engine under the hood for self-driving cars?

Nowadays, people rely on maps to take them to their destinations. And so will autonomous vehicles because of their inherent spatial nature.

But they will scan their environment and overlay those scans on a pre-existing map to determine where to go.

Despite the advancement in SLAM technology, the current dilemma is achieving high accuracy while keeping maps up to date.

Because driverless cars need maps that are free of errors and continuously updated with absolute spatial accuracy, we can’t detach their pre-loaded map from the outside world they capture.

Autonomous vehicles are decades away. Geocoded addresses are worthless: where is the front door, where is the back door, maybe there is no door, maybe I don’t want to be dropped off at the door. On top of that, geocoded addresses are not very accurate outside of highly dense (urban) areas.

Who will provide the coordinates, how will the data be securely stored, and who will ensure that the data is valid, e.g. when an angry employee places his business’s location on train tracks?

What happens if the car gets lost? Who will guide it correctly? I can think of infinite scenarios, so I don’t expect to be in an autonomous car in my lifetime.

How will satellite GPS in self-driving cars compensate for propagation delay or loss of signal caused by weather conditions?